Docker-based workers»

Spacelift Docker-based workers consist of two main components: the launcher binary, and the worker binary. The launcher is responsible for:

- Downloading the correct version of the worker binary to be able to execute Spacelift runs.

- Starting new Docker containers in response to those runs being scheduled.

We suggest using our Terraform modules to deploy your workers, but you can also follow our instructions on manual setup if you need to deploy workers to an environment not supported by our Terraform modules.

Terraform modules»

For AWS, Azure, and GCP users, Spacelift provides an easy way to run worker pools. The spacelift-io/spacelift-worker-image repository contains the code for Spacelift's base virtual machine images, and the following repositories contain Terraform modules to customize and deploy worker pools to AWS, Azure, or GCP:

- AWS: terraform-aws-spacelift-workerpool-on-ec2

- Azure: terraform-azure-spacelift-workerpool

- GCP: terraform-google-spacelift-workerpool

Info

AWS ECS is supported when using the EC2 launch type, but Spacelift does not currently provide a Terraform module for this setup.

Add certificates to the OpenTofu/Terraform process»

OpenTofu and Terraform run as separate processes inside a Docker container and read certificates from the system certificate store (/etc/ssl/certs/ca-certificates.crt). If your OpenTofu/Terraform configuration needs to communicate with services that use a custom certificate authority (for example, a private provider registry, a custom state backend, or a private module source), you need to supply those certificates to the system store inside the runner container.

The approach is to create an extended CA bundle on the worker host, and then use SPACELIFT_WORKER_EXTRA_MOUNTS to mount it into the runner container.

First, prepare your custom CA certificate.

Place your CA certificate file on your local machine (e.g., custom-ca.pem).

Second, create an extended CA bundle.

Download the existing CA certificate bundle from the runner image and append your custom certificate:
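A minimal sketch of this step, assuming Docker is available on your local machine and that the image keeps its bundle at /etc/ssl/certs/ca-certificates.crt. The helper name build_ca_bundle and the intermediate file name ca-certificates.crt are illustrative, and --entrypoint cat is used only so the command does not depend on the image's default entrypoint.

```shell
# Hypothetical helper: concatenate a base CA bundle and a custom CA certificate
# into one output file, adding a newline after each input that lacks one.
build_ca_bundle() {
  local base="$1" custom="$2" out="$3"
  {
    cat "$base"
    # $(tail -c 1 ...) is empty when the last byte is a newline.
    [ -z "$(tail -c 1 "$base")" ] || echo
    cat "$custom"
    [ -z "$(tail -c 1 "$custom")" ] || echo
  } > "$out"
}

# Usage (requires Docker):
#   docker run --rm --entrypoint cat \
#     public.ecr.aws/spacelift/runner-terraform:latest \
#     /etc/ssl/certs/ca-certificates.crt > ca-certificates.crt
#   build_ca_bundle ca-certificates.crt custom-ca.pem custom-ca-bundle.crt
```

Writing the result to a new file keeps the extracted bundle untouched, so the step can be repeated safely.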

Tip

If you are using a custom runner image, replace public.ecr.aws/spacelift/runner-terraform:latest with your image. The certificate bundle path may differ depending on the base image (e.g., /etc/pki/tls/certs/ca-bundle.crt on Red Hat-based images).

Ensure the resulting custom-ca-bundle.crt ends with a newline.
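One way to check the result is sketched below. The function names are illustrative, and the certificate count is only a rough sanity check that assumes PEM-encoded entries.

```shell
# Succeed only if the file is non-empty and its last byte is a newline.
ends_with_newline() {
  [ -s "$1" ] && [ -z "$(tail -c 1 "$1")" ]
}

# Print the number of PEM certificates found in a bundle.
count_certs() {
  grep -c -- '-----BEGIN CERTIFICATE-----' "$1"
}

# Usage:
#   ends_with_newline custom-ca-bundle.crt || echo >> custom-ca-bundle.crt
#   count_certs custom-ca-bundle.crt
```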

Finally, place the bundle on the worker host and configure the mount.

The bundle needs to be available on the EC2 instance at a known path, and then mounted into the runner container using SPACELIFT_WORKER_EXTRA_MOUNTS.

If you deploy workers with the AWS Terraform module, use its configuration variable to write the bundle to disk during instance startup and set the mount:
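The shell portion of such a configuration might look like the sketch below. It assumes the configuration value runs as a shell fragment on the instance before the launcher starts, as this step implies. install_ca_bundle is a hypothetical helper, and the mount value assumes SPACELIFT_WORKER_EXTRA_MOUNTS takes a host-path:container-path pair.

```shell
# Hypothetical helper: write the bundle read from stdin to the given path,
# creating the parent directory and making the file world-readable.
install_ca_bundle() {
  local target="$1"
  mkdir -p "$(dirname "$target")"
  cat > "$target"
  chmod 0644 "$target"
}

# Usage inside the startup fragment (bundle contents elided):
#   install_ca_bundle /etc/spacelift/custom-ca-bundle.crt <<'EOF'
#   -----BEGIN CERTIFICATE-----
#   ...
#   -----END CERTIFICATE-----
#   EOF
#   export SPACELIFT_WORKER_EXTRA_MOUNTS=/etc/spacelift/custom-ca-bundle.crt:/etc/ssl/certs/ca-certificates.crt
```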

If you run the launcher manually, copy the custom-ca-bundle.crt file to the worker host (for example, to /etc/spacelift/custom-ca-bundle.crt), and export the mount before starting the launcher:
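A sketch of the export, assuming the variable takes a host-path:container-path pair. mount_for_bundle is an illustrative helper that refuses to build the value when the host file is missing, which would otherwise surface later as a failed container start.

```shell
# Path of the bundle on the host and the file it replaces inside the container.
HOST_BUNDLE="${HOST_BUNDLE:-/etc/spacelift/custom-ca-bundle.crt}"
CONTAINER_BUNDLE="/etc/ssl/certs/ca-certificates.crt"

# Print "<host path>:<container path>", failing early if the host file is unreadable.
mount_for_bundle() {
  [ -r "$1" ] || { echo "CA bundle not readable: $1" >&2; return 1; }
  printf '%s:%s\n' "$1" "$2"
}

# Usage, before starting the launcher:
#   mounts="$(mount_for_bundle "$HOST_BUNDLE" "$CONTAINER_BUNDLE")" &&
#     export SPACELIFT_WORKER_EXTRA_MOUNTS="$mounts"
```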

Info

This mounts the custom bundle as a bind mount into every runner container. The mount overrides the container's built-in /etc/ssl/certs/ca-certificates.crt, so all TLS clients inside the container (including OpenTofu, Terraform, and any providers) will trust your custom CA.

Warning

If you update your runner image, the built-in CA certificates in the image may change. You should regenerate your extended CA bundle whenever you update your runner image to ensure it includes the latest upstream CA certificates alongside your custom ones.

Manual setup»

Prerequisites»

The launcher expects to be able to write to the local Docker socket. Make sure that Docker is installed and running.
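A quick preflight check could look like the sketch below. It assumes the default socket path /var/run/docker.sock, and check_docker is an illustrative name. Run it as the same user that will run the launcher.

```shell
# Succeed only if the Docker socket exists, is writable by the current user,
# and the daemon answers a basic request.
check_docker() {
  local sock="${1:-/var/run/docker.sock}"
  [ -S "$sock" ] || { echo "no Docker socket at $sock" >&2; return 1; }
  [ -w "$sock" ] || { echo "Docker socket is not writable: $sock" >&2; return 1; }
  docker info > /dev/null 2>&1 || { echo "Docker daemon is not responding" >&2; return 1; }
}

# Usage:
#   check_docker && echo "Docker is ready"
```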

Downloading the launcher»

The launcher is distributed as a statically-linked Go binary, and we provide slightly different versions depending on whether you are using one of our standard environments (for example app.spacelift.io or us.app.spacelift.io) or our FedRAMP environment. The key difference is that the FedRAMP version disables some of the telemetry we use to investigate customer issues.

For each environment, we provide amd64 and arm64 builds. Download the one that matches your Spacelift environment and host architecture.
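If you script the download, the host architecture can be mapped to these two names as in the sketch below. launcher_arch is an illustrative helper, and download locations are deliberately left out.

```shell
# Map a machine name (default: the output of uname -m) to amd64 or arm64.
launcher_arch() {
  local machine="${1:-$(uname -m)}"
  case "$machine" in
    x86_64|amd64)  echo amd64 ;;
    aarch64|arm64) echo arm64 ;;
    *) echo "unsupported architecture: $machine" >&2; return 1 ;;
  esac
}

# Usage:
#   arch="$(launcher_arch)" && echo "download the $arch launcher"
```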

Tip

In general, arm64-based virtual machines are cheaper than amd64-based ones, so if your cloud provider supports them, we recommend using them. If you choose to do so, and you're using custom runner images, make sure they're compatible with arm64.

All Spacelift-provided runner images are compatible with both CPU architectures.

Running the launcher»

Run the launcher binary using the following commands, replacing <worker-pool-id> with the ID of your pool:

These commands set the following environment variables and then execute the launcher binary:

- SPACELIFT_TOKEN: The token you received from Spacelift on worker pool creation.

- SPACELIFT_POOL_PRIVATE_KEY: The contents of the private key file you generated, in base64.
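Before starting the launcher, it can help to confirm that both variables are actually exported. The sketch below uses an illustrative require_env helper, and the launcher path in the usage comment is a placeholder.

```shell
# Report every named variable that is not exported or is empty.
# Returns 0 only when all of them are set to non-empty values.
require_env() {
  local name missing=0
  for name in "$@"; do
    # printenv only sees exported variables, which is what a child process inherits.
    if [ -z "$(printenv "$name")" ]; then
      echo "missing or empty environment variable: $name" >&2
      missing=1
    fi
  done
  return "$missing"
}

# Usage (placeholder launcher path):
#   require_env SPACELIFT_TOKEN SPACELIFT_POOL_PRIVATE_KEY && ./spacelift-launcher
```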

Info

You need to encode the entire private key using base64, making it a single line of text. The simplest approach is to run cat spacelift.key | base64 -w 0 in your command line. For Mac users, the command is cat spacelift.key | base64 -b 0.
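If you want one command that works on both Linux and macOS, a sketch that avoids the platform-specific wrap flags is to strip newlines from the encoder's output. encode_key is an illustrative name.

```shell
# Encode a file as single-line base64 without relying on -w or -b.
encode_key() {
  base64 < "$1" | tr -d '\n'
}

# Usage:
#   export SPACELIFT_POOL_PRIVATE_KEY="$(encode_key spacelift.key)"
```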

Your launcher should now connect to the Spacelift backend and start handling runs.

Periodic updates»

Our worker infrastructure consists of two binaries: launcher and worker. The setup for the binaries generally follows this process:

- The latest version of the launcher binary is downloaded during the instance startup.

- The launcher establishes a connection with the Spacelift backend and waits for messages.

- When it gets a message, the launcher downloads the latest version of the worker binary and executes it.

- The worker binary executes the actual Spacelift runs.

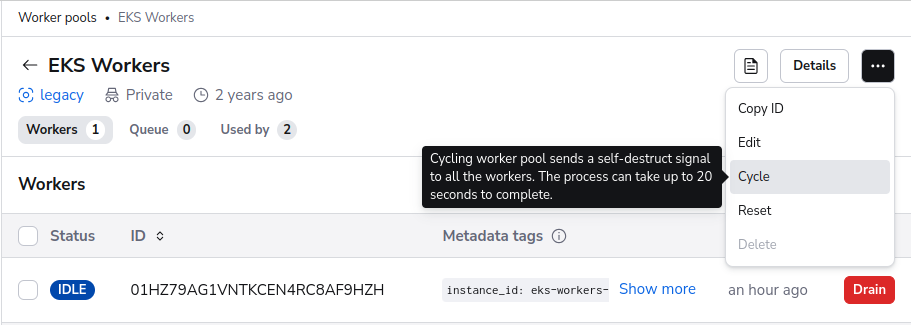

This setup ensures that the worker binary is always up to date, but the launcher may not be. The worker binary typically receives more updates than the launcher, but we still recommend recycling the worker pool periodically so that the launcher stays up to date. The simplest way to do this is via the Cycle option on the worker pool page.

AMI updates & deprecation policy»

If you run your workers in AWS and use the Spacelift AMIs, update your worker pool routinely. The AMIs receive weekly updates to keep all system components up to date.

Currently, each AWS AMI is deprecated 364 days after its release and removed after 420 days. You won't be able to start new instances using a deprecated AMI, but existing instances will continue to run.
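To plan around these windows, the dates can be computed from an AMI's release date. The sketch below assumes GNU date and that the removal window is also counted from the release date. The function names and the release date in the usage comment are illustrative.

```shell
# Print the date N days after a YYYY-MM-DD date, computed in UTC (GNU date).
days_after() {
  local start
  start=$(date -u -d "$1" +%s) || return 1
  date -u -d "@$(( start + $2 * 86400 ))" +%F
}

# Print the deprecation and removal dates for a given release date.
ami_lifecycle() {
  echo "deprecated: $(days_after "$1" 364)"
  echo "removed:    $(days_after "$1" 420)"
}

# Usage:
#   ami_lifecycle 2025-01-01
```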

EC2 Spot Instances»

The AWS Terraform module supports EC2 Spot Instances for up to 90% cost savings.

Not recommended for production/critical workloads

Spot instances are NOT recommended for critical or production workloads as they can be interrupted with only 2 minutes' notice, potentially causing:

- Incomplete or corrupted Terraform state.

- Failed deployments leaving infrastructure in an inconsistent state.

- Loss of work-in-progress for long-running operations.

Use Spot instances only for development, testing, or fault-tolerant workloads where interruption is acceptable, for example: ephemeral environments, Terraform modules, or operations with guaranteed runtimes under one minute.

The Spacelift worker includes graceful interruption handling: it monitors for spot interruption notices and allows running jobs to complete when possible. However, if a run doesn't complete within the 2-minute interruption grace period, it will be abruptly terminated and crash.
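For illustration only, the sketch below shows how an interruption notice can be read from the EC2 instance metadata service (IMDSv2). It is not the Spacelift worker's implementation. spot_interruption_notice is a hypothetical helper, and the optional base URL argument exists only to make the function easy to exercise outside EC2.

```shell
# Print the pending interruption notice (JSON) and return 0 if one exists.
# Returns non-zero when no interruption is scheduled (HTTP 404) or when the
# metadata service cannot be reached.
spot_interruption_notice() {
  local base="${1:-http://169.254.169.254}" token
  # IMDSv2 requires a short-lived session token for each read.
  token=$(curl -sf --max-time 2 -X PUT \
    -H "X-aws-ec2-metadata-token-ttl-seconds: 60" \
    "$base/latest/api/token") || return 1
  curl -sf --max-time 2 \
    -H "X-aws-ec2-metadata-token: $token" \
    "$base/latest/meta-data/spot/instance-action"
}

# Usage on a Spot instance:
#   if notice="$(spot_interruption_notice)"; then
#     echo "interruption scheduled: $notice"
#   fi
```

Polling this endpoint every few seconds is a common way to detect the 2-minute warning from inside the instance.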

Use the AWS EC2 Spot Instance Advisor to select cost-effective instance types with lower interruption rates. See the spot instances example for more configuration options.